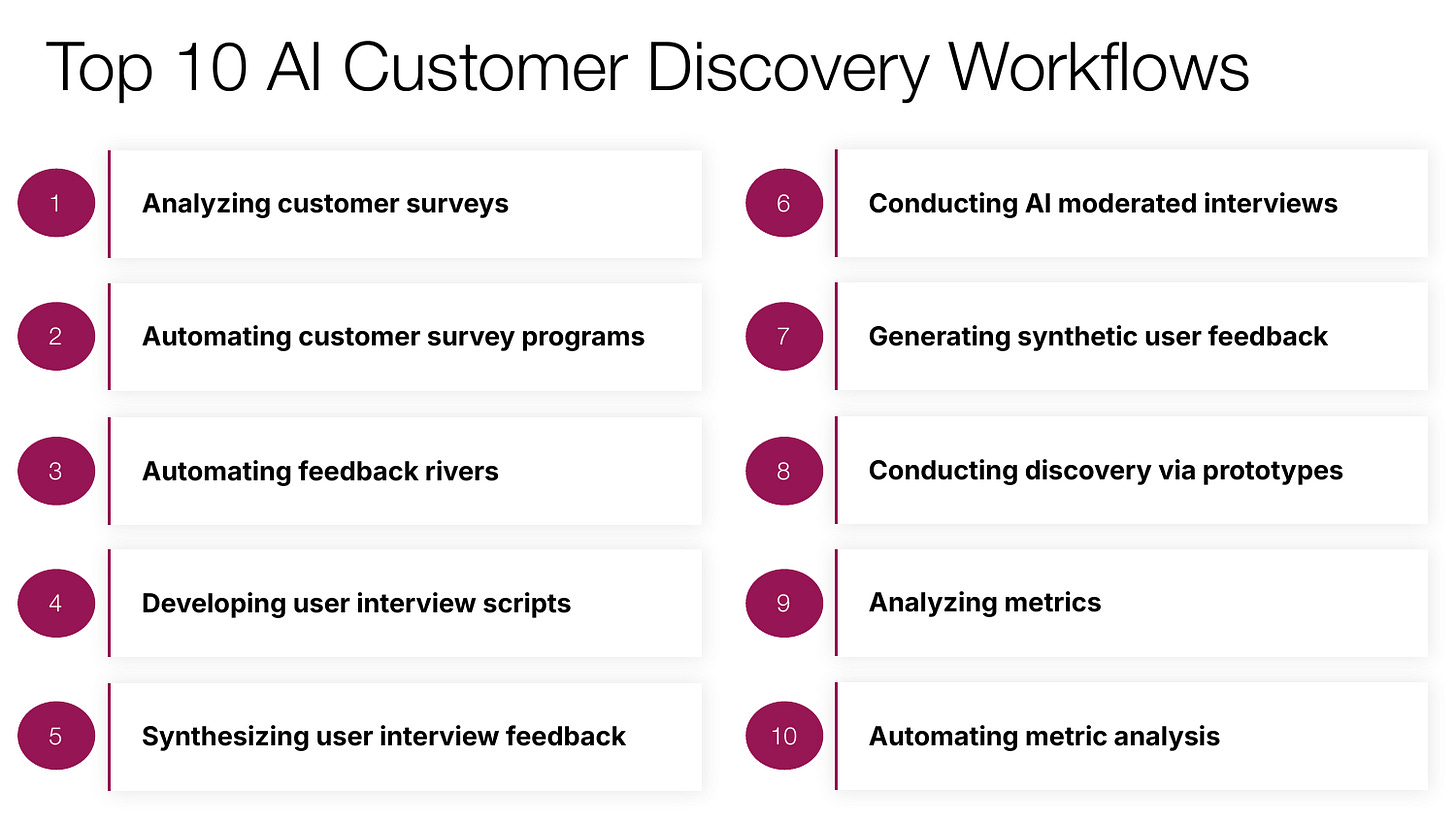

AI Powered Customer Discovery

10 ways to accelerate your team's customer discovery process with AI

Hey there 👋 This guide is a preview of some of the content from my course, AI Productivity, which is designed to help product managers bring AI fluency to every aspect of their role, whether it’s customer discovery, prototyping, product strategy, or execution. Next cohort starts April 7th.

Andrew Ng, co-founder of Coursera and founder of DeepLearning.AI, recently made a provocative statement: the bottleneck in product development is no longer engineering; it’s now product management. For the entire history of product management, we’ve been waiting on engineers to build the product. But with AI coding tools like Claude Code, Cursor, and Codex, engineering teams now have extraordinary leverage. The delivery side of product development has been dramatically accelerated.

The challenge is that building great products has always been about both delivering the solution and discovering what’s worth building. So far, product teams are not seeing the same acceleration on the discovery side as on the delivery side. In fact, I’m now seeing product managers who simply can’t keep up with their engineering counterparts. When that happens, one of two bad outcomes typically follows: either progress is meaningfully constrained, or engineering teams ship features that haven’t been validated with customers. No one is happy with either outcome.

The exciting news is that AI is now emerging as a powerful accelerant for customer discovery as well. These tools and capabilities aren’t yet as advanced as the coding tools on the delivery side, but they are rapidly improving.

Given this discovery constraint, I conducted a deep dive to understand how teams could leverage these new AI-powered discovery workflows. I’ve been experimenting with these approaches in my own product management process at Notejoy, and I’ve found 10 AI workflows that are genuinely transforming how I approach customer discovery.

In this guide, I’ll walk you through each workflow and share how it accelerates my discovery efforts, the AI tools I’ve found most useful for it, and best practices for getting great results.

My hope is that you’ll walk away with a handful of new AI-powered workflows that you can immediately bring back to your team to accelerate your own discovery efforts.

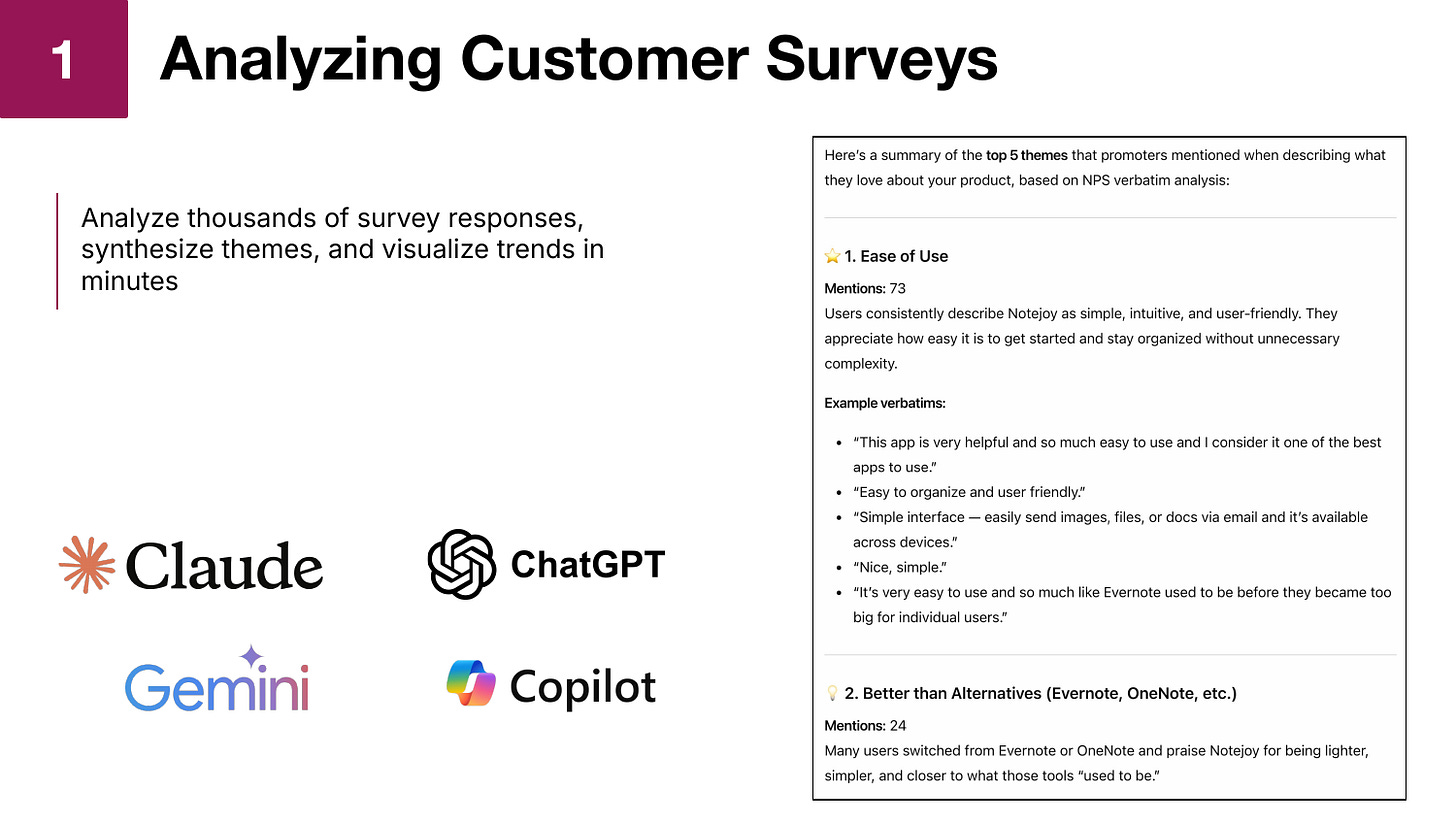

Analyzing Customer Surveys

The first workflow is using AI to synthesize feedback from customer surveys. You can now take thousands of survey responses, have AI synthesize the most frequent themes, and visualize the results in minutes.

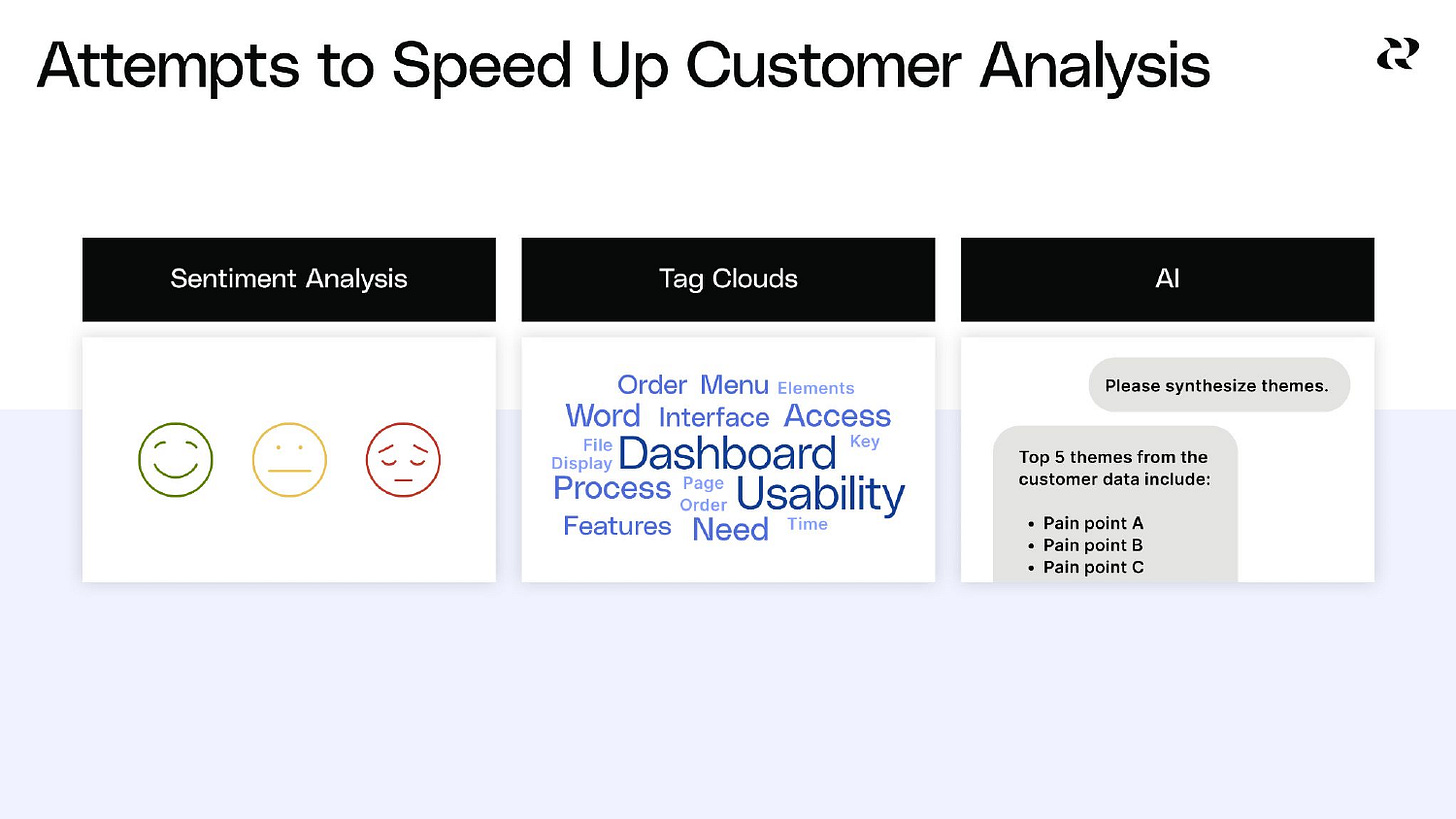

I think sometimes, because I now use AI every day, I have to remind myself just how revolutionary this actually is. Just a couple of years ago, the best technology we had for analyzing surveys was sentiment analysis, which could tell you whether a response was positive, neutral, or negative. Not particularly actionable. We then moved to tag clouds, which surfaced frequent keywords across the surveys. Again, still not actionable enough. It still meant that someone had to read every survey response, collate the feedback, and manually create themes. I did this work all the time.

But now generative AI has become remarkably good at taking raw survey responses and generating thematic insights. If you tried this a year ago and thought the results weren’t very good, the improvement since then is night and day. I’ve compared my own manual synthesis of customer survey themes against AI-generated synthesis, and not only does the AI find very similar themes, it has even surfaced valuable insights I hadn’t initially spotted myself.

The practical impact of this capability is enormous. When I ran NPS surveys at LinkedIn, I could only do it quarterly because I had a team of marketers spending an entire week gathering results and reading through a thousand survey responses. Now I can run NPS surveys far more frequently.

What’s equally powerful is that AI allows you to run unlimited segmentation analyses. At LinkedIn, I might ask my team for two or three segmentation cuts. Now I can run 15 segmentations because it only costs me another line of typing. I can segment by usage patterns, by subscription type, by email domain, and even ask for statistical significance calculations on each comparison. All of this is automatically done for me.
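To make the segmentation idea concrete, here is a minimal sketch of the arithmetic an AI chatbot would typically run when asked for NPS by segment with a significance check. It is an illustration under stated assumptions, not the output of any particular tool: the `plan` and `score` field names and the sample rows are hypothetical, and the significance test is a simple normal approximation on the difference between two NPS values.

```python
import math
from collections import defaultdict

def nps_stats(scores):
    """Return (nps, standard_error) on the -100..100 scale for 0-10 scores."""
    n = len(scores)
    promoters = sum(1 for s in scores if s >= 9) / n   # scores of 9 or 10
    detractors = sum(1 for s in scores if s <= 6) / n  # scores of 0 through 6
    nps = promoters - detractors
    # Each response counts as +1, 0, or -1, so the per-response variance is:
    variance = promoters + detractors - nps ** 2
    return 100 * nps, 100 * math.sqrt(variance / n)

def nps_by_segment(rows, segment_key):
    """Group survey rows by a segment field and compute NPS stats per group."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[segment_key]].append(row["score"])
    return {segment: nps_stats(scores) for segment, scores in groups.items()}

def p_value(a, b):
    """Two-sided p-value for the NPS difference between two (nps, se) pairs.

    Uses a normal approximation; it is undefined when both standard errors
    are zero (for example, when every response in both segments is a promoter).
    """
    z = (a[0] - b[0]) / math.sqrt(a[1] ** 2 + b[1] ** 2)
    return math.erfc(abs(z) / math.sqrt(2))

# Hypothetical sample data: the field names are assumptions for illustration.
ROWS = [
    {"plan": "free", "score": 10}, {"plan": "free", "score": 9},
    {"plan": "free", "score": 3}, {"plan": "free", "score": 7},
    {"plan": "paid", "score": 10}, {"plan": "paid", "score": 10},
    {"plan": "paid", "score": 9}, {"plan": "paid", "score": 8},
]

if __name__ == "__main__":
    stats = nps_by_segment(ROWS, "plan")
    for segment, (nps, se) in stats.items():
        print(f"{segment}: NPS {nps:.0f} (standard error {se:.1f})")
    print(f"p-value, free vs paid: {p_value(stats['free'], stats['paid']):.3f}")
```

Adding another segmentation cut is just another call to `nps_by_segment` with a different field name, which is why each extra cut costs so little once the data is loaded. With samples as small as this one, the p-value will rarely indicate a significant difference, so it is worth checking segment sizes before reading much into any comparison.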

All of the popular AI chatbots — ChatGPT, Claude, Gemini, and Copilot — are great for analyzing customer surveys, so you can easily leverage whatever your team is already using to accomplish this.

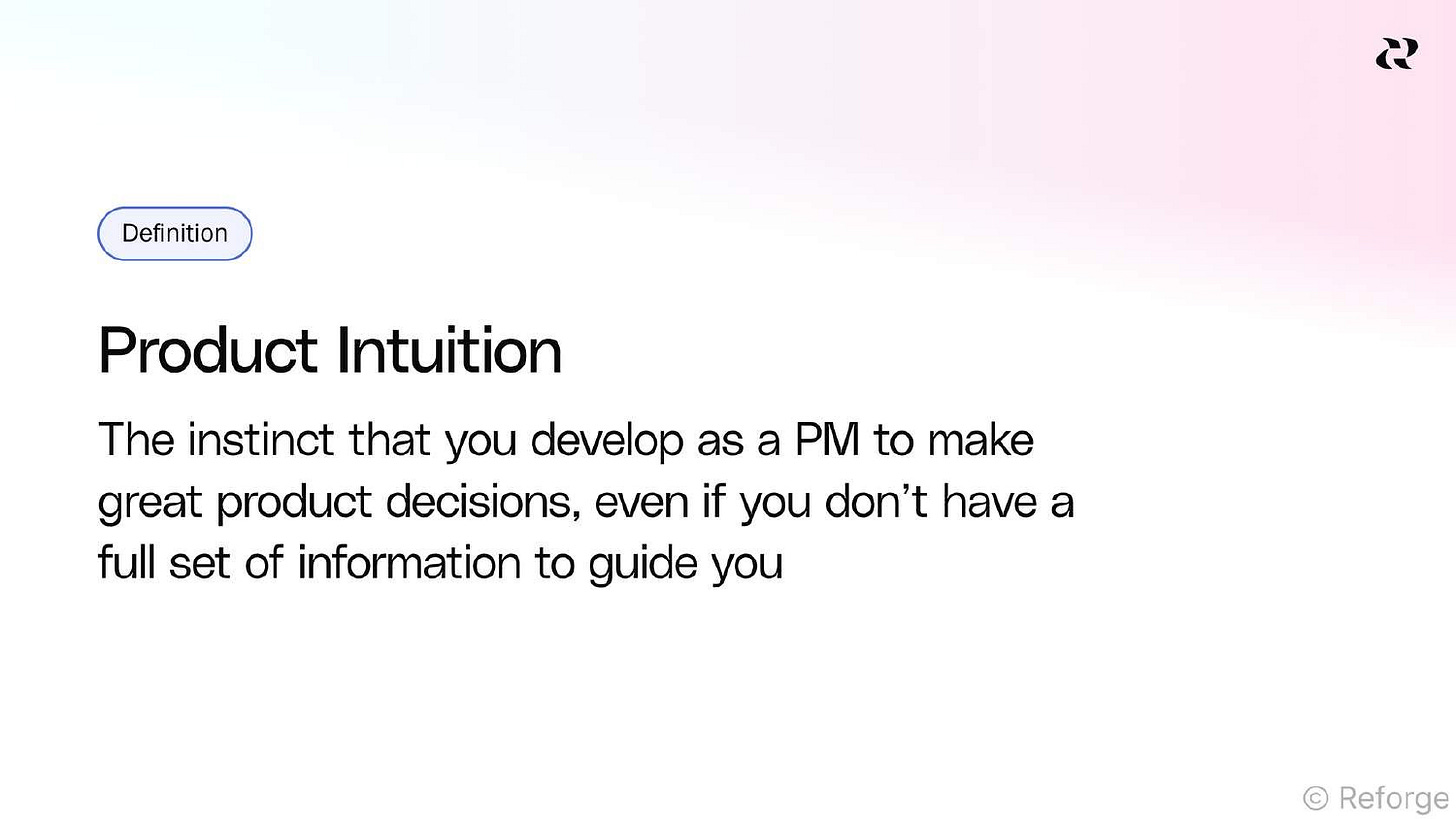

One word of caution here. As product managers, we need to continually build our product intuition to enable us to make smart decisions every day in the absence of data. I like to think of product intuition as our own machine learning model that we build by reading customer feedback, pattern matching across it, and synthesizing those patterns into working insights. AI is great at this, but we need to stay in the loop. That means when AI generates themes, I always ask it to show me the exact customer verbatims underneath each theme. I’m not just relying on its commentary and summarization. I want to directly hear and interrogate the voice of the customer. By consuming that direct feedback, I continue to build my own intuition while relying on AI to do the heavy lifting of aggregation and summarization.

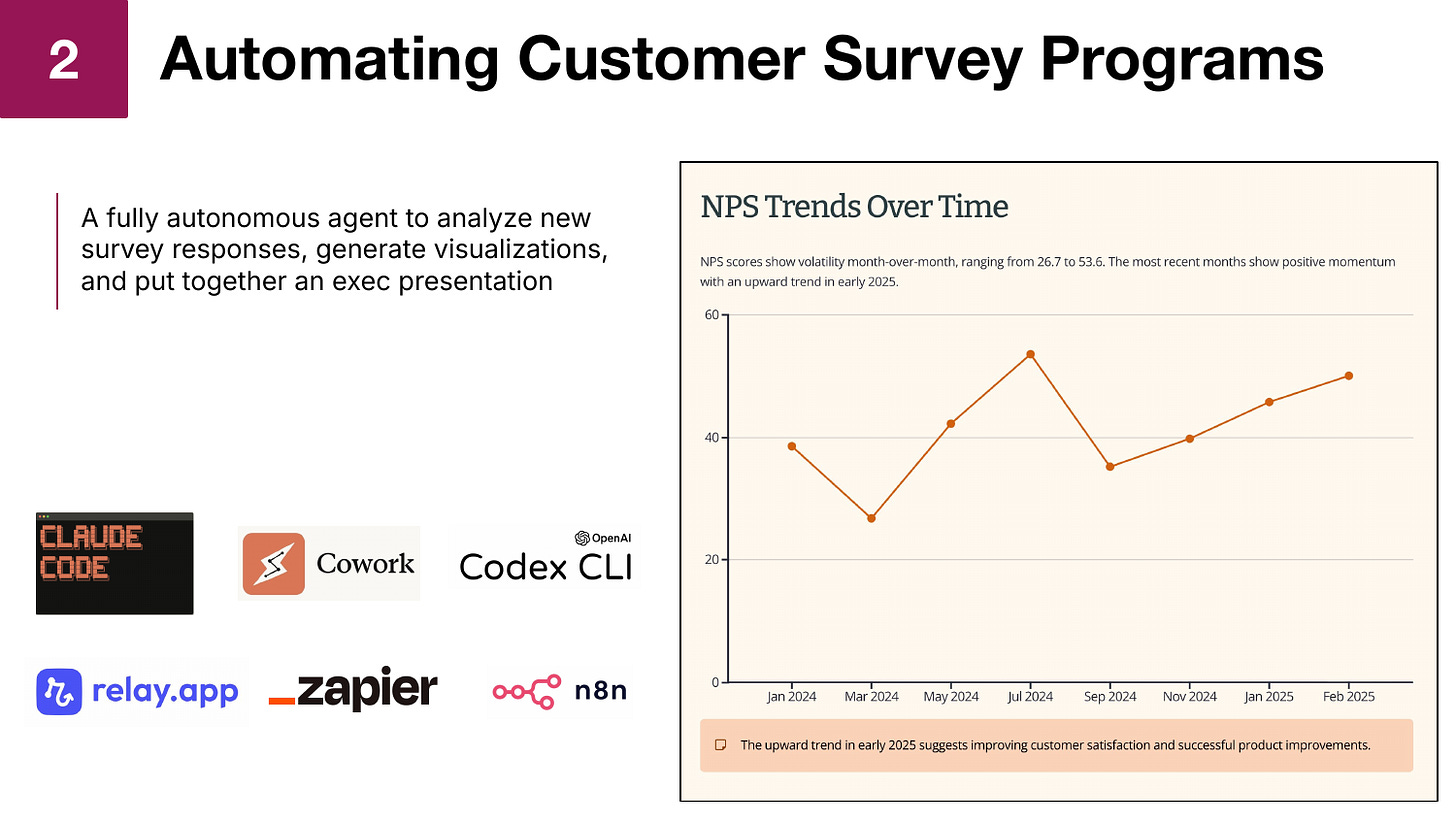

Automating Customer Survey Programs

The ad hoc analysis I described above is incredibly powerful and useful, but I’ve taken it a step further by fully automating the process with an autonomous agent that produces weekly NPS survey reports for me without any manual intervention.

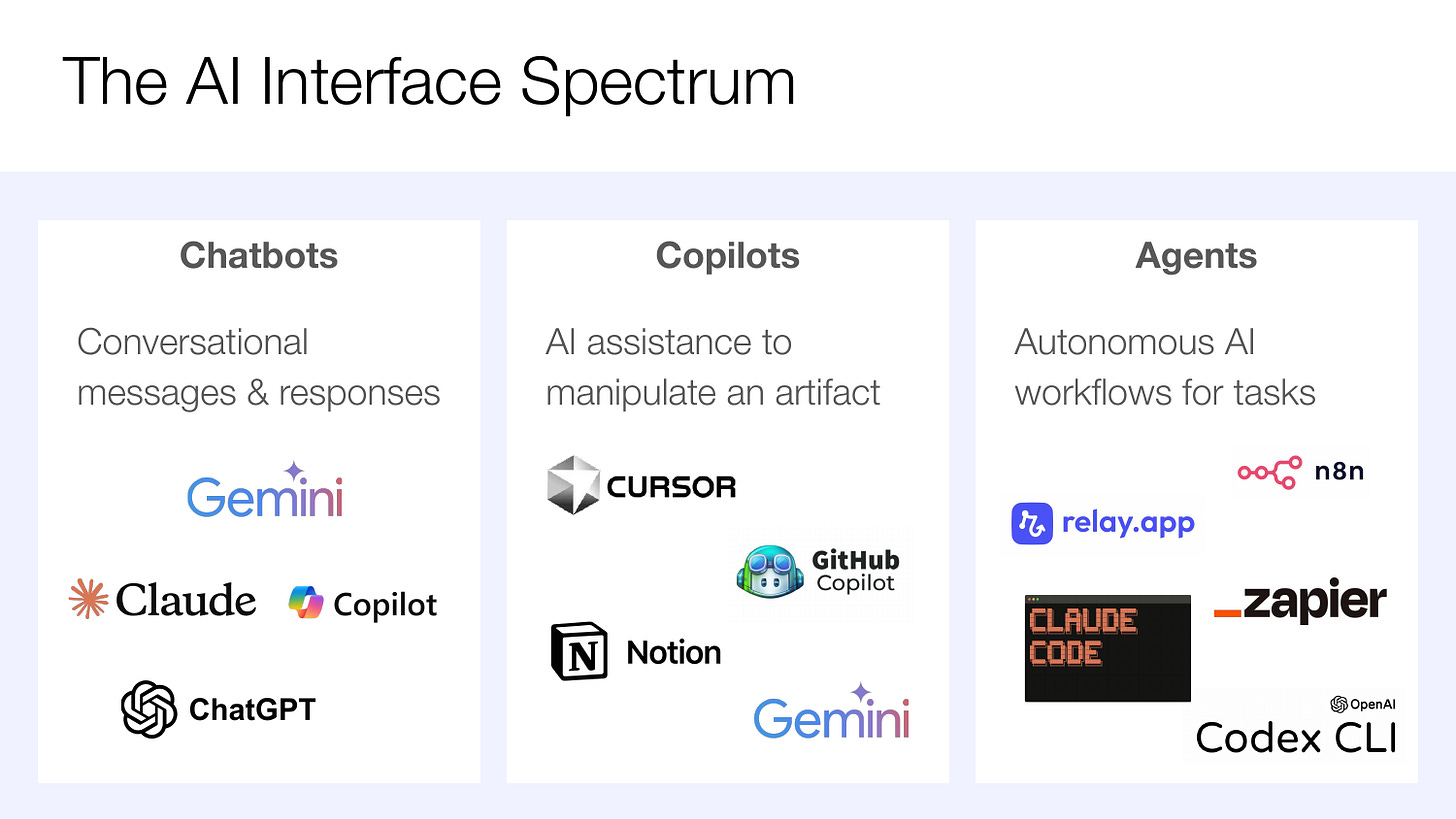

To understand this approach, it’s helpful to think about the full spectrum of AI interfaces that now exist. We started with chatbots, which have now become ubiquitous. With these tools, you give them a prompt and get back a quick answer. We then innovated with the introduction of copilots, where AI shows up as a sidebar to help you directly manipulate an artifact, whether that’s code in Cursor, a Notion document, or a Google Sheet. The third category, which is really taking off right now, is agents: autonomous AI workflows that can run end to end without requiring your input.

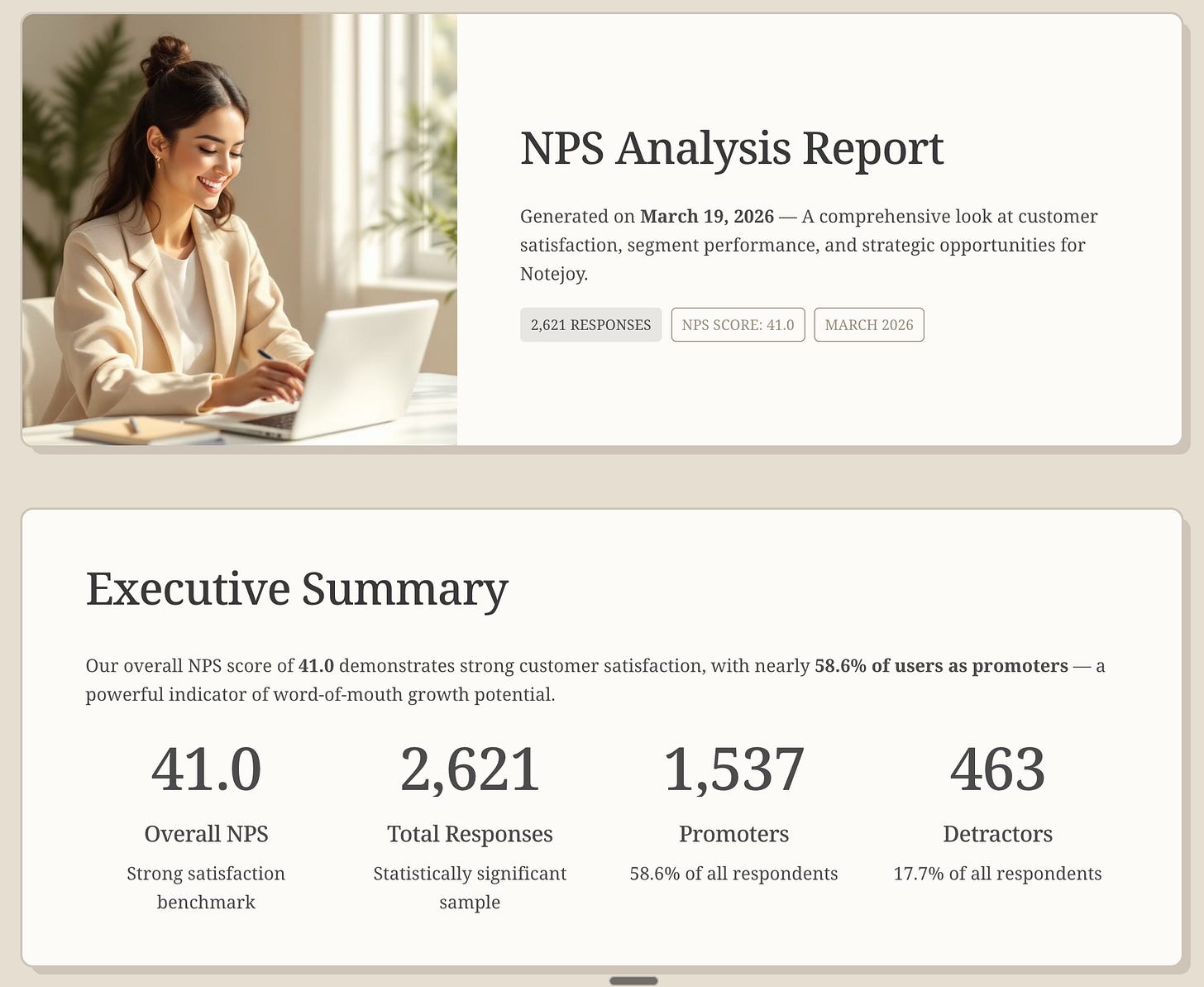

I’ve built an agent using Claude Code that takes my NPS survey results file, runs the full numerical analysis, generates promoter and detractor verbatim themes, produces an interactive HTML report, and even creates an executive presentation using Gamma, an AI presentation tool. The entire workflow is defined in a skill file written in plain English that describes each step the agent should follow.

The output is a fully automated executive report that includes NPS scores, interactive trend graphs, segmentation analysis, verbatim themes, voice of customer quotes, and even product improvement recommendations. I used to produce this kind of report quarterly at LinkedIn because it took a team of real people a week to put together. Now I do this weekly. I just download the latest NPS results, kick off the agent, and get a polished report delivered automatically.
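For a sense of what the numerical step of such a report involves, here is a minimal sketch that turns an NPS export into a weekly trend and a bare-bones HTML table. This is not the agent or skill file described above. It assumes a hypothetical CSV export with `date` and `score` columns, and it leaves out the themes, quotes, and presentation steps entirely.

```python
import csv
import io
from collections import defaultdict
from datetime import date

def nps(scores):
    """Net Promoter Score for a list of 0-10 scores, rounded to an integer."""
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return round(100 * (promoters - detractors) / len(scores))

def weekly_nps(csv_text):
    """Group responses by ISO (year, week) and compute NPS for each week."""
    by_week = defaultdict(list)
    for row in csv.DictReader(io.StringIO(csv_text)):
        year, week, _ = date.fromisoformat(row["date"]).isocalendar()
        by_week[(year, week)].append(int(row["score"]))
    return {week: nps(scores) for week, scores in sorted(by_week.items())}

def render_report(trend):
    """Render the weekly trend as a minimal HTML table."""
    rows = "".join(
        f"<tr><td>{year}-W{week:02d}</td><td>{score}</td></tr>"
        for (year, week), score in trend.items()
    )
    return f"<table><tr><th>Week</th><th>NPS</th></tr>{rows}</table>"

# Hypothetical export: the column names and values are assumptions.
SAMPLE_CSV = """date,score
2025-01-06,10
2025-01-07,6
2025-01-08,9
2025-01-13,10
2025-01-14,9
"""

if __name__ == "__main__":
    print(render_report(weekly_nps(SAMPLE_CSV)))
```

In an agent-based setup, a script like this would be one step the agent runs or writes for itself, with the later steps building on its output.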

Coding agents like Claude Code and Codex have become my preferred way of building agents these days. But an equally viable approach is to use workflow automation tools like Relay.app, Zapier, and n8n to build end-to-end autonomous agents. I’d encourage you to try building a workflow both ways and decide which approach feels easier to you.

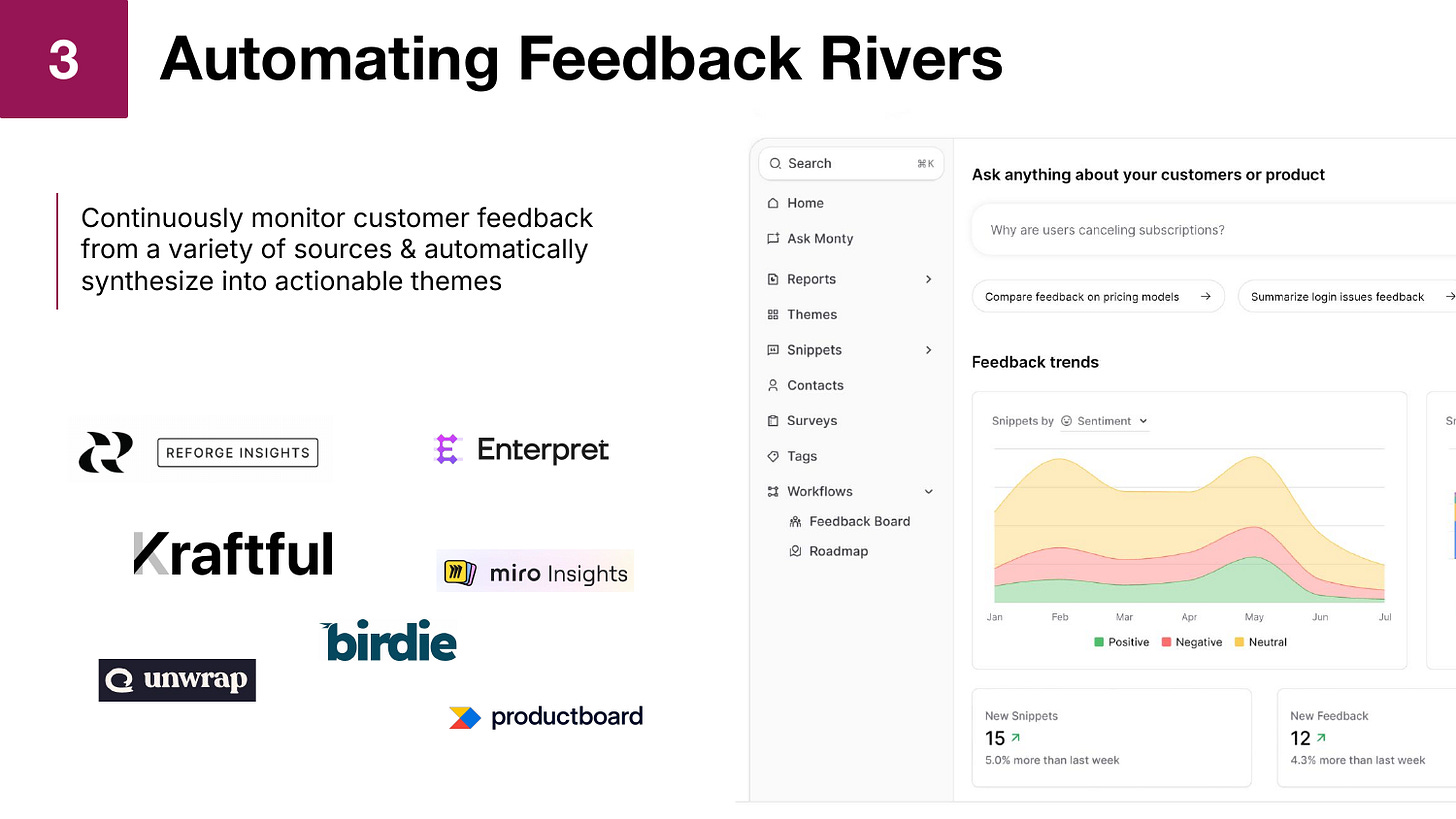

Automating Feedback Rivers

A new category of tools has recently emerged that is fantastic for customer discovery. I call them feedback rivers. The idea is simple: aggregate customer feedback from a variety of sources, put it all in one place, and continuously monitor it. Maybe it’s pulling in your App Store reviews, Google Play reviews, G2 reviews, Zendesk tickets, or even Reddit discussions. The tool aggregates all of it and uses AI to automatically synthesize themes across all that feedback.

There are many tools in this category, including Reforge Insights, Enterpret, Kraftful, Birdie, and Productboard Pulse. They all have different features, but the core value proposition is the same: automated, continuous insight into customer sentiment across every channel.

What I find particularly valuable about these tools is the ability to see themes trending over time. If I see an issue trending up, I might decide to take it more seriously. After we implement a fix, I can see in real time whether the volume of related complaints is actually going down. It gives me a continuous pulse on what’s happening with my customers.
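The trend view boils down to counting theme mentions per period and comparing recent volume to earlier volume. Here is a minimal sketch of that idea, assuming feedback items have already been tagged with a theme by whatever tool you use. The `(week, theme)` data shape, the sample values, and the two-week comparison window are all assumptions for illustration, not how any specific product works.

```python
from collections import Counter

def weekly_counts(items, theme):
    """Count mentions of a theme for every week present in the data."""
    counts = Counter(week for week, item_theme in items if item_theme == theme)
    weeks = sorted({week for week, _ in items})
    return [counts[week] for week in weeks]  # Counter returns 0 for quiet weeks

def is_trending_up(counts, window=2):
    """True if the last `window` weeks had more mentions than the window before."""
    if len(counts) < 2 * window:
        return False  # not enough history to compare two full windows
    recent = sum(counts[-window:])
    previous = sum(counts[-2 * window:-window])
    return recent > previous

# Hypothetical tagged feedback as (week_number, theme) pairs.
ITEMS = [
    (1, "sync errors"), (1, "pricing"), (1, "pricing"),
    (2, "sync errors"), (2, "pricing"),
    (3, "sync errors"), (3, "sync errors"), (3, "pricing"),
    (4, "sync errors"), (4, "sync errors"), (4, "sync errors"),
]

if __name__ == "__main__":
    for theme in ("sync errors", "pricing"):
        counts = weekly_counts(ITEMS, theme)
        print(theme, counts, "trending up" if is_trending_up(counts) else "flat or down")
```

The same comparison run after a fix ships shows whether complaint volume for that theme is falling.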

I can also click into any theme and see exactly which customers said what. If we decide to investigate an issue further, I know exactly who to reach out to. I have their email addresses and can send targeted outreach to 20 customers who experienced the same pain point. In this way, these tools act as a feedback CRM: they let me understand exactly who is experiencing a particular issue, see the value of those customers to our business, and close the loop to turn a feature implementation into a customer win.
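The feedback CRM idea can be sketched as a simple lookup: given tagged feedback, pull the unique customers who mentioned a theme and rank them by their value to the business. The `email`, `theme`, and `annual_value` fields and the sample records below are hypothetical and only show the shape of the query.

```python
def outreach_list(feedback, theme, limit=20):
    """Return up to `limit` unique emails for a theme, highest-value customers first."""
    value_by_email = {}
    for item in feedback:
        if item["theme"] == theme:
            email = item["email"]
            # Keep one entry per customer, even if they reported the issue twice.
            value_by_email[email] = max(value_by_email.get(email, 0), item["annual_value"])
    # Sort by value descending, then by email so ties are ordered deterministically.
    ranked = sorted(value_by_email, key=lambda e: (-value_by_email[e], e))
    return ranked[:limit]

# Hypothetical tagged feedback records.
FEEDBACK = [
    {"email": "a@example.com", "theme": "sync errors", "annual_value": 1200},
    {"email": "b@example.com", "theme": "sync errors", "annual_value": 4800},
    {"email": "a@example.com", "theme": "sync errors", "annual_value": 1200},
    {"email": "c@example.com", "theme": "pricing", "annual_value": 9600},
]

if __name__ == "__main__":
    print(outreach_list(FEEDBACK, "sync errors"))
```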
